| Video Memory Specifications | |
| --- | --- |
| Type | HBM2e |
| Size | 80GB |
| Resolution | Compute-focused GPU (no direct display output) |
| Core Clock | 1.41 GHz (boost) |
| Memory Bandwidth | Up to 2,039 GB/s (SXM) |
| BUS Type | PCIe Gen4 / NVLink |
| Memory Interface | 5120-bit |
| CUDA Cores | 6912 |
| Others | Supports ECC memory for high reliability |

| Power Specifications | |
| --- | --- |
| Recommended PSU | 750W+ per system |
| Consumption | 400W TDP |

| Display Option | |
| --- | --- |
| Multi Display | Not for display outputs, supports computational workloads |

| Application Programming Interfaces | |
| --- | --- |
| OpenGL | Limited; mainly CUDA / AI / HPC frameworks |
| DirectX | N/A (compute-focused) |

| Physical Specifications | |
| --- | --- |
| Dimensions | ~112mm x 194mm (SXM form factor) |
| Others | Dual NVLink connectors for multi-GPU scaling |

| Warranty | |
| --- | --- |
| Manufacturing Warranty | 2 Years |

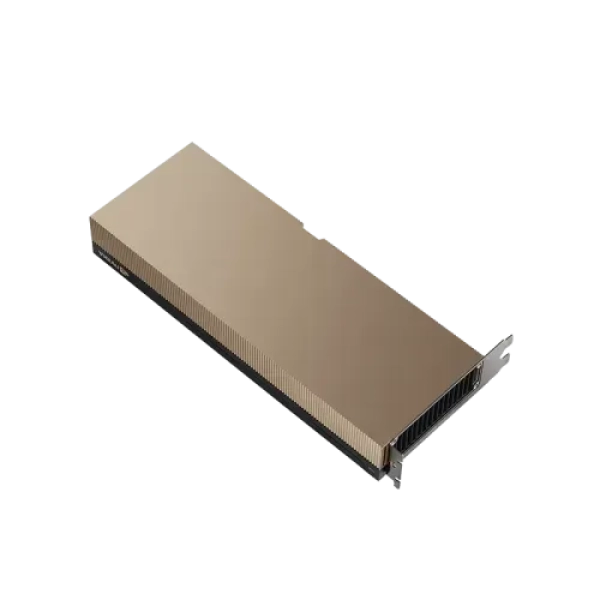

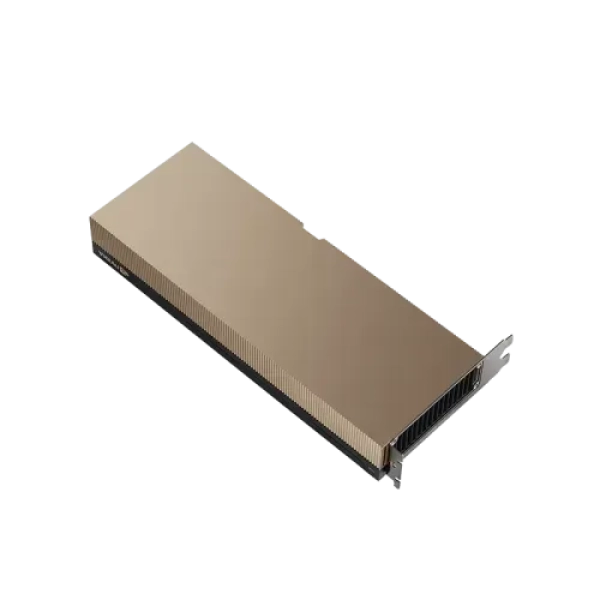

Enterprise-grade GPU built for AI training, deep learning, and high-performance computing with massive 80GB memory and extreme bandwidth

The NVIDIA A100 80GB PCIe/SXM Graphics Card is one of the most powerful data center GPUs designed for artificial intelligence, machine learning, and high-performance computing workloads. Built on NVIDIA’s Ampere architecture, it delivers exceptional acceleration for modern computational tasks.

With 80GB of HBM2e memory, this GPU can handle extremely large datasets and complex AI models with ease. Its memory bandwidth exceeds 1.9 TB/s, allowing rapid data movement and improved processing efficiency for demanding applications.
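To put those figures in perspective, here is a rough back-of-the-envelope sketch in Python. It uses only numbers quoted on this page (80GB of memory, 1.9 TB/s of bandwidth, a 5120-bit memory interface) and assumes decimal units and ideal, overhead-free transfers, so real workloads will be slower. The function names are illustrative, not part of any NVIDIA tool.

```python
def transfer_time_seconds(data_gb: float, bandwidth_gb_per_s: float) -> float:
    """Ideal time to move data_gb gigabytes at a sustained bandwidth (no overheads)."""
    if bandwidth_gb_per_s <= 0:
        raise ValueError("bandwidth must be positive")
    return data_gb / bandwidth_gb_per_s


def per_pin_rate_gbps(bandwidth_gb_per_s: float, bus_width_bits: int) -> float:
    """Per-pin data rate in Gbit/s implied by a total bandwidth and a bus width."""
    if bus_width_bits <= 0:
        raise ValueError("bus width must be positive")
    # Bandwidth is in gigabytes per second, so multiply by 8 to get gigabits.
    return bandwidth_gb_per_s * 8 / bus_width_bits


MEMORY_GB = 80             # memory size quoted on this page
BANDWIDTH_GB_PER_S = 1900  # 1.9 TB/s, the lower bound quoted on this page
BUS_WIDTH_BITS = 5120      # memory interface width from the spec table

sweep_ms = transfer_time_seconds(MEMORY_GB, BANDWIDTH_GB_PER_S) * 1000
pin_rate = per_pin_rate_gbps(BANDWIDTH_GB_PER_S, BUS_WIDTH_BITS)

print(f"One full pass over {MEMORY_GB} GB: about {sweep_ms:.1f} ms")
print(f"Implied per-pin data rate: about {pin_rate:.2f} Gbit/s")
```

Under these assumptions, the GPU could in principle read its entire 80GB of memory in roughly 42 ms.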

The A100 introduces Multi-Instance GPU (MIG) technology, which partitions the GPU into up to seven isolated instances, each with its own dedicated compute and memory resources. Multiple users or workloads can therefore run simultaneously with predictable performance, which makes the card efficient for cloud and enterprise environments.
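As a rough illustration of how MIG partitioning constrains what can share one card, the Python sketch below checks whether a requested mix of instances fits within the GPU's slice budget. The profile names and slice counts are not taken from this page; they reflect NVIDIA's published MIG profiles for the 80GB A100 as best understood here and should be verified against NVIDIA's documentation. The check is also deliberately simplified: real MIG placement rules are stricter, so passing it is necessary but not sufficient.

```python
# Assumed slice cost per MIG profile on an 80GB A100: (compute slices, memory slices).
# These values are an assumption for illustration; confirm them in NVIDIA's MIG guide.
PROFILE_SLICES = {
    "1g.10gb": (1, 1),
    "2g.20gb": (2, 2),
    "3g.40gb": (3, 4),
    "4g.40gb": (4, 4),
    "7g.80gb": (7, 8),
}
TOTAL_COMPUTE_SLICES = 7  # assumed compute slice budget for one GPU
TOTAL_MEMORY_SLICES = 8   # assumed memory slice budget for one GPU


def fits_on_one_gpu(requested: list[str]) -> bool:
    """Return True if the requested profiles stay within the slice budgets.

    This only sums slice counts; it does not model placement constraints.
    """
    compute = sum(PROFILE_SLICES[name][0] for name in requested)
    memory = sum(PROFILE_SLICES[name][1] for name in requested)
    return compute <= TOTAL_COMPUTE_SLICES and memory <= TOTAL_MEMORY_SLICES


print(fits_on_one_gpu(["1g.10gb"] * 7))                      # seven small instances
print(fits_on_one_gpu(["3g.40gb", "3g.40gb"]))               # two mid-size instances
print(fits_on_one_gpu(["3g.40gb", "3g.40gb", "1g.10gb"]))    # exceeds the memory budget
```

The third request fails because two 40GB instances already use the whole memory budget, even though one compute slice remains free.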

In terms of performance, NVIDIA claims up to 20x higher performance than the previous (Volta) generation in AI workloads. The A100 supports a wide range of precision formats, including FP64, TF32, FP32, FP16, BFLOAT16, and INT8, ensuring flexibility across different applications.
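The choice of precision format also determines how much data fits in memory. The short Python sketch below estimates how many values fit in 80GB for the FP32, FP16, BFLOAT16, and INT8 formats mentioned above, using their standard storage sizes. It assumes decimal gigabytes and that the whole memory is available for values, which ignores activations, optimizer state, and framework overhead, so treat the results as upper bounds.

```python
# Storage size in bytes for one value in each format.
BYTES_PER_VALUE = {"FP32": 4, "FP16": 2, "BFLOAT16": 2, "INT8": 1}
MEMORY_BYTES = 80 * 10**9  # 80GB, using decimal gigabytes


def max_values_in_memory(fmt: str, memory_bytes: int = MEMORY_BYTES) -> int:
    """Upper bound on how many values of the given format fit in memory."""
    return memory_bytes // BYTES_PER_VALUE[fmt]


for fmt in BYTES_PER_VALUE:
    billions = max_values_in_memory(fmt) / 1e9
    print(f"{fmt}: up to about {billions:.0f} billion values")
```

Halving the precision from FP32 to FP16 or BFLOAT16 doubles the number of values that fit, which is one reason lower-precision formats matter for large models.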

The PCIe version offers versatility for standard servers, while the SXM version provides higher performance and power efficiency in specialized systems like NVIDIA HGX platforms. Both variants are widely used in data centers for AI model training, deep learning inference, scientific simulations, and big data analytics.

This GPU is not intended for typical gaming or consumer use. Instead, it is built for enterprise-level computing where extreme performance, scalability, and reliability are required.

Q1: Is the NVIDIA A100 suitable for gaming?

No, it is designed for data centers, AI, and HPC workloads, not gaming.

Q2: What is the difference between PCIe and SXM versions?

PCIe is more flexible for standard servers, while SXM offers higher performance and power efficiency in specialized systems.

Q3: How much memory does the A100 have?

It comes with 80GB of HBM2e high-speed memory.

Q4: What is MIG technology?

MIG allows the GPU to be divided into multiple instances so multiple workloads can run simultaneously.

Q5: What are the main use cases of this GPU?

AI training, deep learning inference, scientific computing, and big data analytics.
